Kognix

AI-powered knowledge workspace that lets you chat with PDFs, notes and URLs via retrieval-augmented generation.

What needed solving.

How I built it.

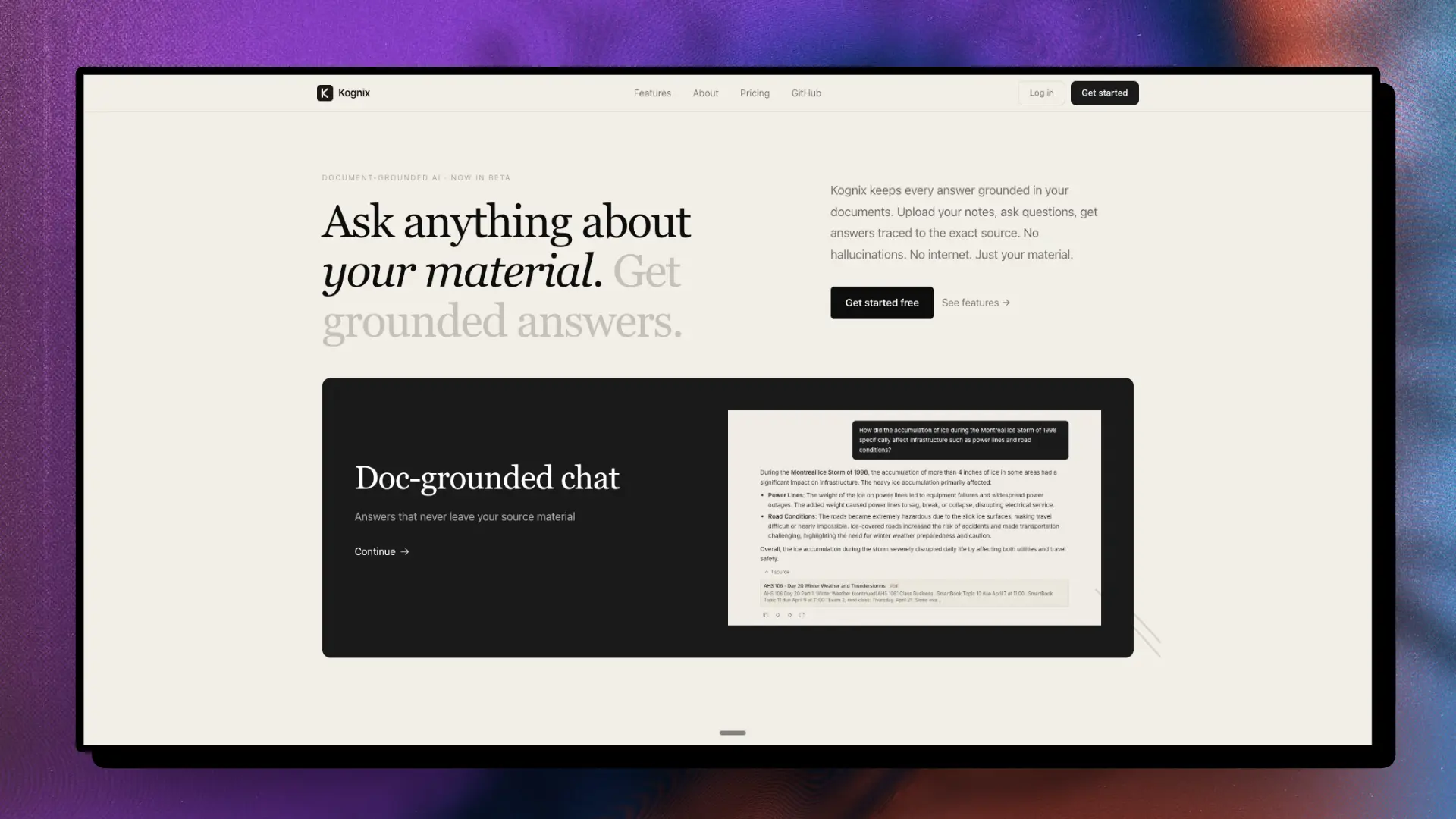

Kognix is a focused RAG workspace: ingest PDFs, notes and URLs, then ask questions whose answers are grounded in your own sources, with citations back to the original document. A free tier lowers the barrier to try it; a bring-your-own-key path keeps power users in control of cost and provider choice.

- 01 Implemented chunk-level citations so every answer links back to the exact source paragraph, not just the document, which makes hallucinations visible rather than hidden.

- 02 Bring-your-own-key support required abstracting the LLM client behind a provider interface, which also made it straightforward to add model switching later.

- 03 Used Cloudflare for edge delivery of the frontend so load times are fast globally without managing regional infrastructure.
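
The provider interface from point 02 could look roughly like the sketch below. It is not Kognix's actual code: `LLMProvider`, `EchoProvider`, `ChatService` and `provider_for` are hypothetical names, and the stand-in provider only echoes the prompt where a real one would call a vendor API.

```python
from dataclasses import dataclass
from typing import Optional, Protocol


class LLMProvider(Protocol):
    """Hypothetical interface: anything that can turn a prompt into text."""

    def complete(self, prompt: str, model: str) -> str: ...


@dataclass
class EchoProvider:
    """Stand-in provider for illustration; a real one would call a vendor API."""

    api_key: str

    def complete(self, prompt: str, model: str) -> str:
        return f"[{model}] {prompt}"


@dataclass
class ChatService:
    """Depends only on the interface, so keys and models can be swapped per user."""

    provider: LLMProvider
    model: str

    def ask(self, question: str, context: str) -> str:
        prompt = f"Context:\n{context}\n\nQuestion: {question}"
        return self.provider.complete(prompt, self.model)


def provider_for(user_key: Optional[str], hosted_key: str) -> EchoProvider:
    # Bring-your-own-key: prefer the user's key, else fall back to the hosted tier.
    return EchoProvider(api_key=user_key or hosted_key)
```

Because `ChatService` only sees the interface, switching models amounts to passing a different `model` string or a different provider object, which matches the "model switching later" benefit described above.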

What it does.

Every answer links back to the exact chunk from your uploaded PDF, note or URL — so you can verify rather than trust.
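
One way chunk-level citations can work is sketched below, under stated assumptions: the `Chunk` fields, the `doc#index` id scheme and the word-overlap retriever are all illustrative. A production pipeline would most likely rank chunks by embedding similarity instead.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Chunk:
    chunk_id: str  # hypothetical id scheme: "<source>#<paragraph index>"
    source: str    # file name or URL the chunk came from
    text: str


def retrieve(query: str, chunks: list[Chunk], k: int = 2) -> list[Chunk]:
    """Toy retriever: rank chunks by shared words, dropping zero-overlap chunks."""
    terms = set(query.lower().split())
    scored = [(len(terms & set(c.text.lower().split())), c) for c in chunks]
    ranked = sorted(scored, key=lambda pair: pair[0], reverse=True)
    return [c for score, c in ranked[:k] if score > 0]


def build_prompt(query: str, hits: list[Chunk]) -> str:
    """Number each chunk so the model can cite it as [1], [2], ..."""
    numbered = "\n".join(f"[{i}] {c.text}" for i, c in enumerate(hits, start=1))
    return (
        "Answer using only the sources below and cite them by number.\n"
        f"{numbered}\n\nQuestion: {query}"
    )


def citations(hits: list[Chunk]) -> dict[int, str]:
    """Map each citation number back to the exact chunk it refers to."""
    return {i: c.chunk_id for i, c in enumerate(hits, start=1)}
```

The mapping returned by `citations` is what lets a UI turn a marker like `[1]` in the answer into a link to the specific paragraph rather than the whole document.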

Power users can supply their own OpenAI API key to control cost and avoid usage caps, while casual users get a hosted free tier.

Upload PDFs, paste notes, or provide URLs. Kognix processes and indexes them all into a unified knowledge base you can query conversationally.
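
As a rough illustration of the "unified knowledge base" idea, the sketch below splits already-extracted text into paragraph chunks and stores them in one flat index regardless of source type. The function names and the paragraph-based splitting are assumptions; PDF parsing and URL fetching are deliberately left out.

```python
def chunk_paragraphs(source: str, text: str) -> list[dict]:
    """Split text on blank lines and tag each paragraph with a stable id."""
    paragraphs = [p.strip() for p in text.split("\n\n") if p.strip()]
    return [
        {"id": f"{source}#{i}", "source": source, "text": p}
        for i, p in enumerate(paragraphs)
    ]


def build_index(documents: dict[str, str]) -> list[dict]:
    """Merge chunks from every source (PDF, note, URL) into one list."""
    index: list[dict] = []
    for source, text in documents.items():
        index.extend(chunk_paragraphs(source, text))
    return index
```

Keeping the source name inside each chunk id means any retrieved chunk can be traced back to where it came from, whatever its original format.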

Full feature list

- 01 Simple onboarding and dashboard

- 02 Retrieval-augmented generation pipeline

What it shipped.

Launched publicly on Railway and Cloudflare. The bring-your-own-key flow had a higher activation rate than expected — cost-conscious users appreciated the transparency. Source citations were consistently mentioned as the key differentiator over general-purpose chatbots.

Built with.

FastAPI-inspired REST framework, published to PyPI — built to understand framework internals from the ground up.